Motivation

Chatbots are among the most popular applications of large language models (LLMs). Often, an LLM's internal knowledge is adequate for answering users' questions. In some cases, however, the model may generate outdated, incorrect, or overly generic responses when specificity is expected. These challenges can be partially addressed by supplementing the LLM with an external knowledge base and employing the retrieval-augmented generation (RAG) technique.

When user queries are complex, it may also be necessary to break the task into several sub-tasks. In such cases, relying solely on RAG may not be sufficient, and the use of agents may be required.

The fundamental concept of agents involves using a language model to determine which actions to take (including the use of external tools) and in what order. One possible action could be retrieving data from an external knowledge base in response to a user's query. In this tutorial, we will develop a simple agent that accesses multiple data sources and invokes data retrieval when needed. We will use the Dingo framework, which allows the development of LLM pipelines and autonomous agents.
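As a rough illustration of this idea, here is a minimal, framework-free sketch of an agent loop. Everything in it is hypothetical: `fake_model` stands in for an LLM, `lookup_docs` stands in for a retrieval tool, and `max_calls` is just a name chosen for the call budget. It is not how Dingo implements agents; it only shows the control flow of "let the model pick the next action until it answers".

```python
from typing import Callable, Dict, List, Tuple

# A decision is either ("call", tool_name, argument) or ("answer", text, "").
Decision = Tuple[str, str, str]


def lookup_docs(query: str) -> str:
    """Hypothetical tool: pretend to search a knowledge base."""
    knowledge = {"phi-3": "Phi-3 is a family of small language models."}
    return knowledge.get(query.lower(), "no results")


def fake_model(question: str, observations: List[str]) -> Decision:
    """Stand-in for an LLM: request a tool once, then answer from its result."""
    if not observations:
        return ("call", "lookup_docs", question)
    return ("answer", f"Based on the docs: {observations[-1]}", "")


def run_agent(
    question: str,
    model: Callable[[str, List[str]], Decision],
    tools: Dict[str, Callable[[str], str]],
    max_calls: int = 3,
) -> str:
    """Let the model choose actions until it answers or the call budget runs out."""
    observations: List[str] = []
    for _ in range(max_calls):
        kind, name_or_text, argument = model(question, observations)
        if kind == "answer":
            return name_or_text
        # The model asked for a tool: run it and feed the result back.
        observations.append(tools[name_or_text](argument))
    return "Stopped: tool-call budget exhausted."


answer = run_agent("Phi-3", fake_model, {"lookup_docs": lookup_docs})
print(answer)
```

The call budget matters in practice: without it, a model that keeps requesting tools would loop forever.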

To follow this tutorial, basic familiarity with RAG is needed.

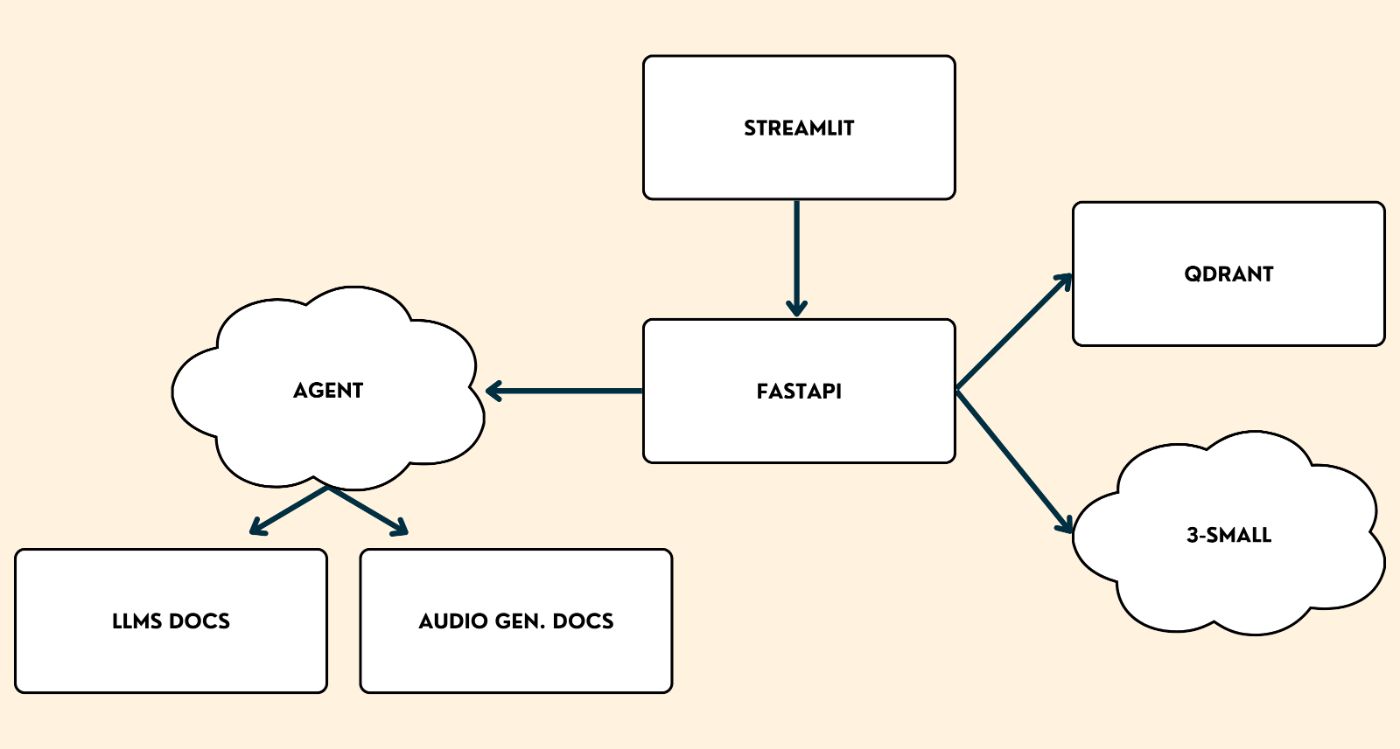

RAG Agent Architecture and Technical Stack

The application will consist of the following components:

- Streamlit: provides a frontend interface for users to interact with a chatbot.
- FastAPI: facilitates communication between the frontend and backend.
- Dingo Agent: an agent powered by the GPT-4 Turbo model from OpenAI that has access to the provided knowledge bases and invokes data retrieval from them if needed.
- LLMs docs: a vector store containing documentation about the recently released Phi-3 (from Microsoft) and Llama 3 (from Meta) models.
- Audio gen docs: a vector store containing documentation about the recently released OpenVoice model from MyShell.
- Embedding V3 small model from OpenAI: computes text embeddings.
- Qdrant: vector database that stores embedded chunks of text.

Implementation

Step 0:

Install the Dingo framework:

pip install agent-dingo

Set the OPENAI_API_KEY environment variable to your OpenAI API key:

export OPENAI_API_KEY=your-api-key
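Since every later script depends on this variable, it can help to fail early with a clear message when it is missing. The helper below is a small sketch, not part of Dingo; the names `require_env` and `mask` are made up for this example.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable or raise a clear error."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise RuntimeError(f"{name} is not set; export it before running the scripts.")
    return value


def mask(secret: str, visible: int = 4) -> str:
    """Hide all but the last few characters so the key can be logged safely."""
    return "*" * max(len(secret) - visible, 0) + secret[-visible:]
```

Calling `mask(require_env("OPENAI_API_KEY"))` at the top of a script would then either print a masked key or stop with an explicit error.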

Step 1:

Create a components.py file, and initialize an embedding model, a chat model, and two vector stores: one for storing documentation of Llama 3 and Phi-3, and another for storing documentation of OpenVoice.

# components.py
from agent_dingo.rag.embedders.openai import OpenAIEmbedder
from agent_dingo.rag.vector_stores.qdrant import Qdrant
from agent_dingo.llm.openai import OpenAI

# Initialize an embedding model
embedder = OpenAIEmbedder(model="text-embedding-3-small")

# Initialize a vector store with information about Phi-3 and Llama 3 models
llm_vector_store = Qdrant(collection_name="llm", embedding_size=1536, path="./qdrant_db_llm")

# Initialize a vector store with information about OpenVoice model
audio_gen_vector_store = Qdrant(collection_name="audio_gen", embedding_size=1536, path="./qdrant_db_audio_gen")

# Initialize an LLM (the architecture section mentions GPT-4 Turbo;
# pass model="gpt-4-turbo" here to use it instead)
llm = OpenAI(model="gpt-3.5-turbo")
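Both vector stores are created with embedding_size=1536, which matches the default output dimension of text-embedding-3-small. If the embedding model is swapped later, the two values must stay in sync. The following is a small sanity check under that assumption; the lookup table and the function name `check_embedding_size` are ours, not part of Dingo or the OpenAI SDK.

```python
# Default output dimensions of OpenAI embedding models.
EMBEDDING_DIMENSIONS = {
    "text-embedding-3-small": 1536,
    "text-embedding-3-large": 3072,
    "text-embedding-ada-002": 1536,
}


def check_embedding_size(model: str, embedding_size: int) -> None:
    """Raise if the vector store size does not match the model's default dimension."""
    expected = EMBEDDING_DIMENSIONS.get(model)
    if expected is None:
        raise KeyError(f"Unknown embedding model: {model}")
    if expected != embedding_size:
        raise ValueError(
            f"{model} produces {expected}-dimensional vectors, "
            f"but the vector store expects {embedding_size}."
        )


# Passes silently for the configuration used in this tutorial.
check_embedding_size("text-embedding-3-small", 1536)
```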

Step 2:

Create a build.py file. Parse the websites containing documentation of the above-mentioned models, split the text into smaller chunks, and embed them. The embedded chunks are then used to populate the corresponding vector stores.

# build.py
from components import llm_vector_store, audio_gen_vector_store, embedder
from agent_dingo.rag.readers.web import WebpageReader
from agent_dingo.rag.chunkers.recursive import RecursiveChunker

# Read the content of the websites
reader = WebpageReader()
phi_3_docs = reader.read("https://azure.microsoft.com/en-us/blog/introducing-phi-3-redefining-whats-possible-with-slms/")
llama_3_docs = reader.read("https://ai.meta.com/blog/meta-llama-3/")
openvoice_docs = reader.read("https://research.myshell.ai/open-voice")

# Chunk the documents
chunker = RecursiveChunker(chunk_size=512)
phi_3_chunks = chunker.chunk(phi_3_docs)
llama_3_chunks = chunker.chunk(llama_3_docs)
openvoice_chunks = chunker.chunk(openvoice_docs)

# Embed the chunks
for chunks in [phi_3_chunks, llama_3_chunks, openvoice_chunks]:
    embedder.embed_chunks(chunks)

# Populate LLM vector store with embedded chunks about Phi-3 and Llama 3
for chunks in [phi_3_chunks, llama_3_chunks]:
    llm_vector_store.upsert_chunks(chunks)

# Populate audio gen vector store with embedded chunks about OpenVoice
audio_gen_vector_store.upsert_chunks(openvoice_chunks)

Run the script:

python build.py

At this point, we have successfully created and populated both vector stores.
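To give an intuition for what the chunking step does, here is a simplified recursive splitter in plain Python. It is only a sketch of the general technique: it measures chunk size in characters and uses a fixed list of separators, both of which are assumptions on our side, and Dingo's RecursiveChunker may behave differently.

```python
from typing import List, Sequence


def recursive_chunk(
    text: str,
    chunk_size: int = 512,
    separators: Sequence[str] = ("\n\n", "\n", " "),
) -> List[str]:
    """Split text into chunks of at most chunk_size characters.

    Coarse separators (paragraphs) are tried first; pieces that are still
    too long are split again with the next, finer separator.
    """
    if len(text) <= chunk_size:
        return [text] if text.strip() else []
    if not separators:
        # No separators left: fall back to fixed-width slices.
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]

    separator, finer = separators[0], separators[1:]
    chunks: List[str] = []
    current = ""
    for piece in text.split(separator):
        candidate = piece if not current else current + separator + piece
        if len(candidate) <= chunk_size:
            current = candidate  # the piece still fits into the open chunk
            continue
        if current.strip():
            chunks.append(current)  # close the open chunk
        current = ""
        if len(piece) <= chunk_size:
            current = piece  # start a new chunk with this piece
        else:
            chunks.extend(recursive_chunk(piece, chunk_size, finer))
    if current.strip():
        chunks.append(current)
    return chunks


print(recursive_chunk("aaa bbb\n\nccc ddd eee", chunk_size=8))
# prints ['aaa bbb', 'ccc ddd', 'eee']
```

Splitting on paragraph boundaries first tends to keep related sentences together, which usually helps retrieval quality more than cutting at fixed offsets.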

Step 3:

Create a serve.py file and build a RAG pipeline. To access the pipeline from the Streamlit application, we can serve it using the serve_pipeline function, which provides a REST API compatible with the OpenAI API.

# serve.py
import json

from agent_dingo.agent import Agent
from agent_dingo.serve import serve_pipeline
from components import llm_vector_store, audio_gen_vector_store, embedder, llm

agent = Agent(llm, max_function_calls=3)

# Define a function that an agent can call if needed
@agent.function
def retrieve(topic: str, query: str) -> str:
    """Retrieves the documents from the vector store based on the similarity to the query.
    This function is to be used to retrieve the additional information in order to answer users' queries.

    Parameters
    ----------
    topic : str
        The topic, can be either "large_language_models" or "audio_generation_models".
        "large_language_models" covers the documentation of Phi-3 family of models from Microsoft and Llama 3 model from Meta.
        "audio_generation_models" covers the documentation of OpenVoice voice cloning model from MyShell.
        Enum: ["large_language_models", "audio_generation_models"]
    query : str
        A string that is used for similarity search of document chunks.

    Returns
    -------
    str
        JSON-formatted string with retrieved chunks.
    """
    print(f'called retrieve with topic {topic} and query {query}')
    # Pick the vector store that matches the requested topic
    if topic == "large_language_models":
        vs = llm_vector_store
    elif topic == "audio_generation_models":
        vs = audio_gen_vector_store
    else:
        return "Unknown topic. The topic must be one of `large_language_models` or `audio_generation_models`"
    query_embedding = embedder.embed(query)[0]
    retrieved_chunks = vs.retrieve(k=5, query=query_embedding)
    print(f'retrieved data: {retrieved_chunks}')
    # Serialize as JSON so the return value matches the docstring
    return json.dumps([chunk.content for chunk in retrieved_chunks])

# Create a pipeline
pipeline = agent.as_pipeline()

# Serve the pipeline
serve_pipeline(
    {"gpt-agent": pipeline},
    host="127.0.0.1",
    port=8000,
    is_async=False,
)

Run the script:

python serve.py

At this stage, we have an OpenAI-compatible backend with a model named gpt-agent, running on http://127.0.0.1:8000/. The Streamlit application will send requests to this backend.
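The retrieval step the agent invokes boils down to two things: routing the query to the right store by topic, and returning the k chunks whose embeddings are closest to the query embedding. The sketch below reproduces that logic with in-memory lists and toy two-dimensional vectors. The use of cosine similarity, the `STORES` dictionary, and the sample chunks are assumptions made for illustration; the real work is done by Qdrant.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]
Store = List[Tuple[str, Vector]]  # (chunk text, embedding) pairs


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(store: Store, query: Vector, k: int = 5) -> List[str]:
    """Return the texts of the k chunks most similar to the query vector."""
    ranked = sorted(store, key=lambda item: cosine_similarity(item[1], query), reverse=True)
    return [text for text, _ in ranked[:k]]


# Toy two-dimensional "embeddings"; real ones have 1536 dimensions.
STORES: Dict[str, Store] = {
    "large_language_models": [
        ("Phi-3 overview", (1.0, 0.0)),
        ("Llama 3 overview", (0.8, 0.6)),
    ],
    "audio_generation_models": [("OpenVoice overview", (0.0, 1.0))],
}


def retrieve_sketch(topic: str, query: Vector, k: int = 5) -> List[str]:
    """Route the query to the store for the topic, then run a top-k search."""
    store = STORES.get(topic)
    if store is None:
        return [f"Unknown topic. The topic must be one of {sorted(STORES)}"]
    return top_k(store, query, k)


print(retrieve_sketch("large_language_models", (0.6, 0.8), k=1))
# prints ['Llama 3 overview']
```

Returning an explanatory message for an unknown topic, rather than raising, gives the model a chance to correct its own call on the next step.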

Step 4:

Create an app.py file and build a chatbot UI:

# app.py
import streamlit as st
from openai import OpenAI

st.title("🦊 Agent")

# provide any string as an api_key parameter
client = OpenAI(base_url="http://127.0.0.1:8000", api_key="123")

if "openai_model" not in st.session_state:
    st.session_state["openai_model"] = "gpt-agent"

if "messages" not in st.session_state:
    st.session_state.messages = []

# Re-render the conversation history on every rerun
for message in st.session_state.messages:
    avatar = "🦊" if message["role"] == "assistant" else "👤"
    with st.chat_message(message["role"], avatar=avatar):
        st.markdown(message["content"])

if prompt := st.chat_input("How can I assist you today?"):
    st.session_state.messages.append({"role": "user", "content": prompt})
    with st.chat_message("user", avatar="👤"):
        st.markdown(prompt)

    with st.chat_message("assistant", avatar="🦊"):
        # The backend returns the full answer at once (stream=False)
        completion = client.chat.completions.create(
            model=st.session_state["openai_model"],
            messages=[
                {"role": m["role"], "content": m["content"]}
                for m in st.session_state.messages
            ],
            stream=False,
        )
        # Emit the answer character by character to imitate streaming in the UI
        response = st.write_stream((i for i in completion.choices[0].message.content))
    st.session_state.messages.append({"role": "assistant", "content": response})

Run the application:

streamlit run app.py
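Because the backend is called with stream=False, the app receives the whole answer at once and only imitates streaming by passing a generator to st.write_stream. One possible variation is to yield words instead of single characters. The generator below is a sketch of that idea; `stream_words` and its `delay` parameter are hypothetical names, not part of Streamlit or Dingo.

```python
import re
import time
from typing import Iterator


def stream_words(text: str, delay: float = 0.0) -> Iterator[str]:
    """Yield text word by word, keeping whitespace so the pieces re-join exactly."""
    for token in re.findall(r"\S+\s*|\s+", text):
        yield token
        if delay:
            time.sleep(delay)  # optional pause to make the effect visible


pieces = list(stream_words("Hello,  world!\nBye"))
print(pieces)
# prints ['Hello,  ', 'world!\n', 'Bye']
```

In app.py, the generator passed to st.write_stream could be replaced with something like stream_words(answer_text, delay=0.02), where answer_text is the message content returned by the backend.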

🎉 We have successfully built an agent that is augmented with the technical documentation of several newly released generative models and can retrieve information from these documents when necessary. We can now ask it some technical questions and check the generated output.

Conclusion

In this tutorial, we developed a RAG agent that can access external knowledge bases and decide for itself whether to retrieve external data, which data source to use (and how many times), and how to rewrite the user's query before retrieval.

The Dingo framework simplifies the development of LLM-based applications by allowing developers to quickly and easily create application prototypes.