Rapid developments in artificial intelligence call for handling this technology with caution. Early concerns focused on the pace of these advances and on the potential for more powerful AI models to be put to unethical commercial use. We now have the opportunity to shape the future of AI and ensure it is used ethically and for good, and the way to get there goes through Brussels.

The EU AI Act

The biggest advancement in AI regulation came in December 2023, when the European Parliament and the Council of the EU reached a political agreement on the AI Act. The AI Act is the first-ever legal framework on AI, which addresses the risks of AI and positions Europe to play a leading role globally. One might think that AI regulation in Europe alone is not sufficient. But it might just be enough thanks to a phenomenon known as the “Brussels Effect.”

The “Brussels Effect”

The “Brussels Effect” refers to the tendency of multinational companies to conform to the stricter rules and regulations set by the EU across their entire business, rather than maintaining different standards for the European market and the rest of the world. This is often driven by the desire to avoid the costs and complexities associated with maintaining multiple product lines or processes.

The term was coined by Columbia Law School professor Anu Bradford in 2012 and developed further in her 2020 book “The Brussels Effect: How the European Union Rules the World.” It highlights the EU’s ability to de facto export its regulations and standards to other markets and countries, given the economic importance and size of the European market.

The General Data Protection Regulation (GDPR) is a prime example of the “Brussels Effect” in action. While the GDPR grants European citizens the right to access all the personal data collected about them by companies, its impact extends far beyond the EU’s borders. Due to the complexities of maintaining separate data handling processes, many global tech giants grant the GDPR’s data privacy protections to all their users worldwide, regardless of their location.

Side note: Google, for example, lets you export the information it has collected on you.

Another example is the USB-C charging mandate. Apple’s decision to adopt this standard globally for iPhones, rather than only for the EU market, shows the “Brussels Effect” at work.

Judging by these examples, not all is lost. I would go as far as saying that the rules in the EU AI Act might set the boundaries for the AI industry worldwide for years to come.

A Closer Look at the EU AI Act

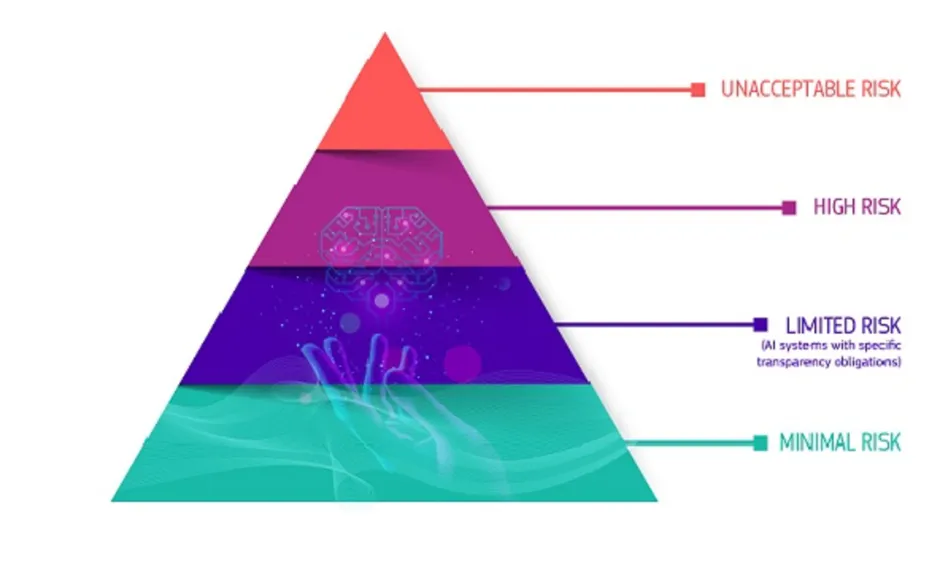

Let’s briefly go over the ideas in the EU AI Act. The main idea is to sort AI systems into four levels of risk: unacceptable, high, limited, and minimal.

Unacceptable-risk applications, those considered a clear threat to safety and human rights, are banned. Examples include social scoring by governments and toys that use voice assistance to encourage dangerous behaviour.

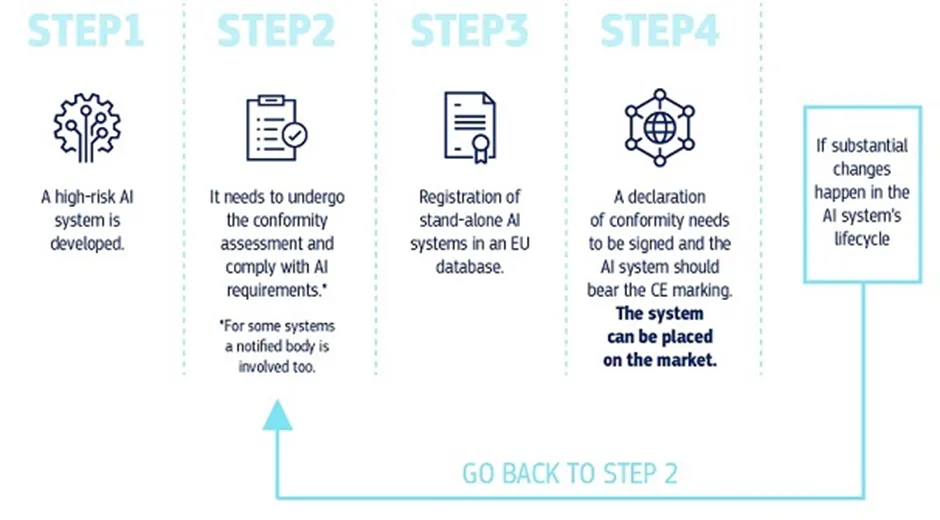

High-risk AI systems are those used in critical areas such as transportation, robot-assisted surgery, or the automated examination of visa applications. They will face strict requirements before market deployment, including risk assessment, high data quality, human oversight, robustness, and documentation.

For AI systems posing limited risks, such as chatbots or AI-generated content, the framework mandates transparency obligations to inform users about their artificial nature.

AI systems labeled as minimal-risk, such as AI-enabled video games and spam filters, can be used freely without restrictions. These systems represent the vast majority of AI applications currently deployed across the EU.
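To make the four tiers concrete, here is a minimal sketch of how a team might record them in code. It only mirrors the summary above and is not the legal text: the tier names, the use-case keys, and the `obligations_for` helper are all hypothetical labels chosen for illustration.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk levels as summarized in this article (illustrative labels)."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligations per tier, paraphrased from the article, not from the Act itself.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["banned"],
    RiskTier.HIGH: [
        "risk assessment",
        "high data quality",
        "human oversight",
        "robustness",
        "documentation",
    ],
    RiskTier.LIMITED: ["transparency about artificial nature"],
    RiskTier.MINIMAL: [],
}

# Example use cases named in the article; the keys are made up for this sketch.
EXAMPLE_USE_CASES = {
    "government_social_scoring": RiskTier.UNACCEPTABLE,
    "robot_assisted_surgery": RiskTier.HIGH,
    "visa_application_screening": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
    "ai_video_game": RiskTier.MINIMAL,
}


def obligations_for(use_case: str) -> list[str]:
    """Return the obligations for a known example use case.

    Raises KeyError for anything not in EXAMPLE_USE_CASES, because real
    classification needs legal analysis rather than a lookup table.
    """
    tier = EXAMPLE_USE_CASES[use_case]
    return list(OBLIGATIONS[tier])
```

Calling `obligations_for("chatbot")` returns the single transparency obligation, while `obligations_for("spam_filter")` returns an empty list. The lookup deliberately fails on unknown use cases instead of guessing a tier.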

The Road Ahead

To conclude, this risk-based framework might very well shape the future of AI: the way companies and users view AI systems and the roles those systems will play in our lives.

This piece of legislation raises several questions: How will the risk levels be decided? Will these risk levels and their definitions change often? How will it impact the development and adoption of such technologies?

As the AI landscape continues to evolve, ongoing dialogue and refinement will be crucial to ensure that the regulatory framework remains effective, ethical, and adaptable to technological advancements. We’ll find out sooner rather than later whether the path to the future of AI indeed goes through Brussels.

References

https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai?embedable=true